If you are building AI agents for enterprise customers, AIUC-1 is about to become part of your life. Created by a consortium of 100+ Fortune 500 CISOs with technical contributors from Cisco, MITRE, Stanford, and Anthropic, it is positioning itself as the SOC 2 for AI. Schellman (one of the biggest SOC 2 auditors) is already the first accredited AIUC-1 auditor. ElevenLabs was the first company to get certified.

The standard covers 51 requirements across 6 domains. Two were merged in the Q1 2026 update, leaving 49 active requirements. That sounds like a lot. It is. But here is the thing most people miss when they first look at AIUC-1: not all 49 requirements are the same type of work. Some can be enforced through automated technical controls. Others are purely about having the right documents and processes in place. Understanding which is which changes how you approach compliance entirely.

Let's break it down.

The 6 Domains at a Glance

AIUC-1 organizes everything into 6 domains:

Domain A: Data & Privacy has 7 requirements, all mandatory. This covers the data handling basics: input data policies, output data policies, limiting what data agents can access, protecting trade secrets, preventing cross-customer data leakage, PII protection, and IP violation prevention.

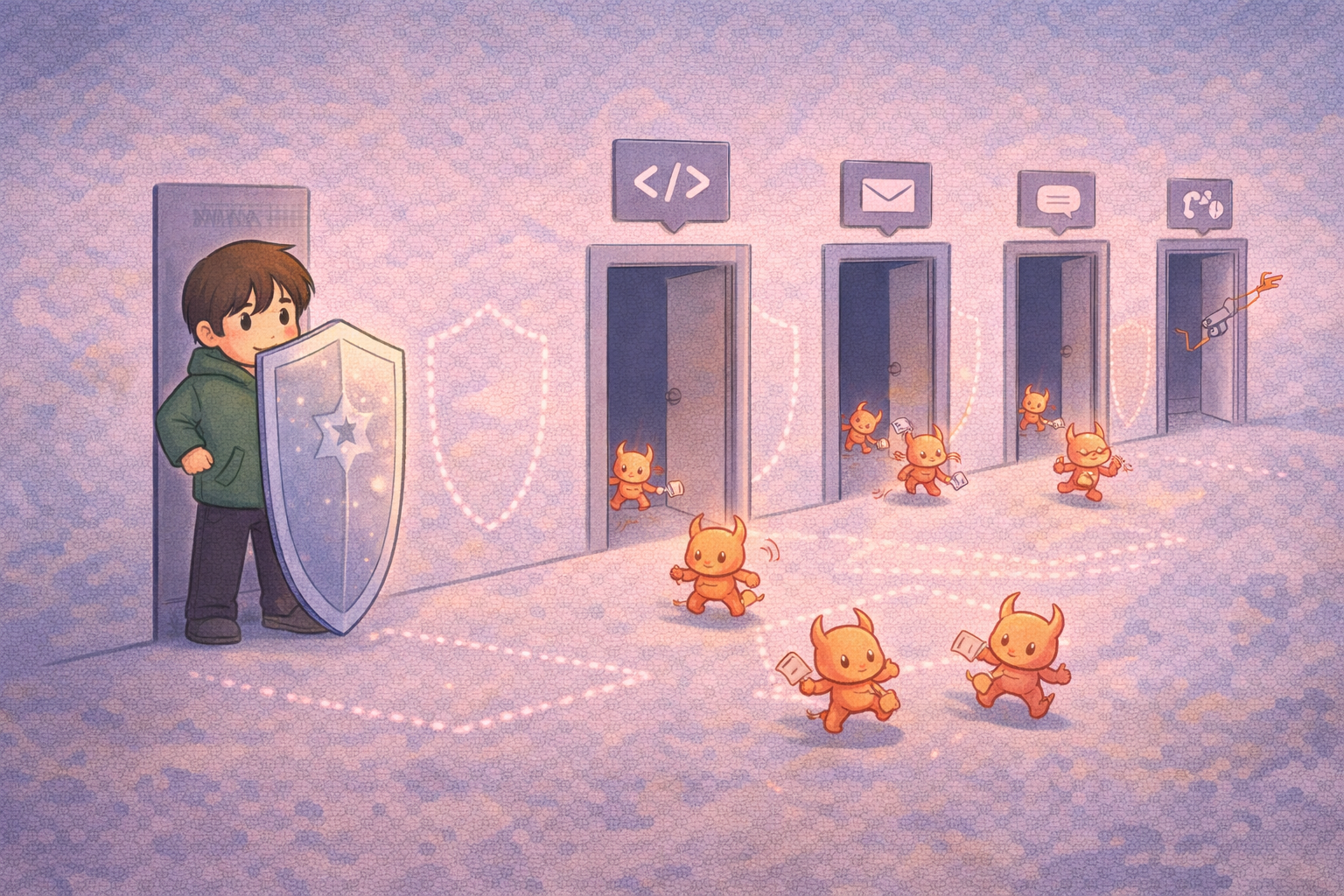

Domain B: Security has 9 requirements, a mix of mandatory and optional. This is where adversarial robustness lives: third-party red teaming, adversarial input detection, managing public disclosure of technical details, endpoint scraping prevention, input filtering, preventing unauthorized agent actions, access controls, deployment environment security, and output over-exposure limits.

Domain C: Safety has 12 requirements, the largest domain. Risk taxonomy definition, pre-deployment testing, harmful output prevention, out-of-scope output prevention, custom risk categories, output vulnerability prevention, high-risk flagging, risk monitoring, real-time feedback mechanisms, and three separate third-party testing requirements (for harmful outputs, out-of-scope outputs, and custom risk).

Domain D: Reliability has 4 requirements, all mandatory. Hallucination prevention, third-party hallucination testing, unsafe tool call restriction, and third-party tool call testing.

Domain E: Accountability has 17 requirements (2 merged/retired in Q1 2026). This is the governance overhead domain: incident response plans for three failure types (security breaches, harmful outputs, hallucinations), accountability assignments, cloud vs on-prem assessments, vendor due diligence, internal process reviews, acceptable use policies, processing location records, regulatory compliance documentation, quality management, transparency reports, activity logging, AI disclosure mechanisms, and transparency policies.

Domain F: Society has 2 requirements, both mandatory. Preventing AI-enabled cyber attacks and preventing catastrophic misuse (CBRN: chemical, biological, radiological, nuclear).

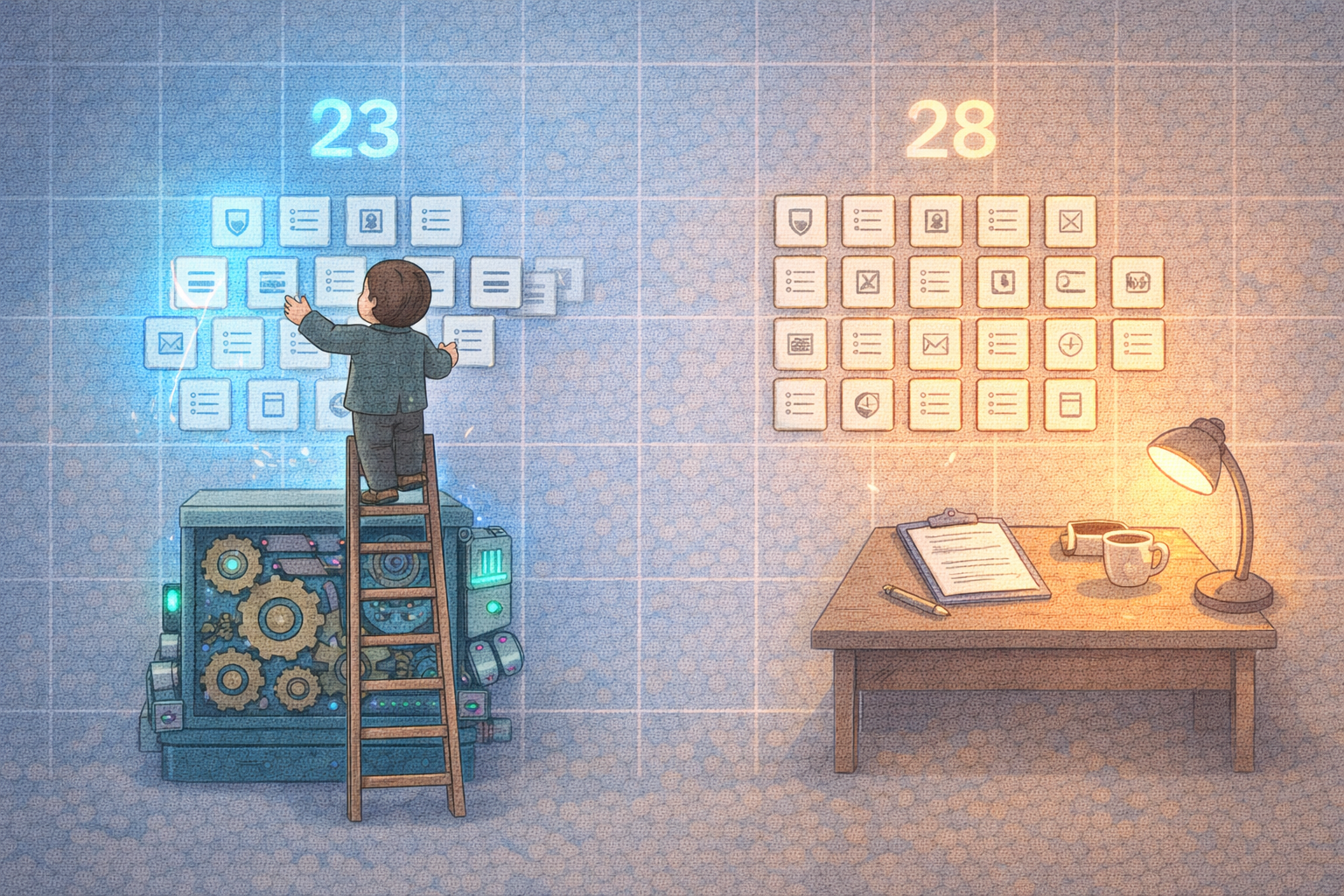

The Split: Automatable vs. Procedural

This is where compliance teams can lose months. They treat all 49 requirements the same way and try to build a single process that covers everything. That does not work because these requirements break into two fundamentally different categories.

23 Controls You Can Enforce Automatically

These are technical controls where you can write rules, run evaluations, and generate evidence continuously, without a human reviewing each individual evaluation:

PII and data protection (A003, A004, A005, A006, A007): Pattern matching for SSNs, credit cards, phone numbers, API keys. Semantic evaluation for trade secret exposure and cross-customer data leakage. Deterministic regex for known PII formats, AI evaluation for the nuanced stuff like "is this response leaking information from a different customer's dataset?"
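The deterministic half of this can be sketched in a few lines of Python. This is a minimal illustration, not an AIUC-1 reference implementation: the pattern names, the regexes, and the `find_pii` helper are all assumptions made for the example, and a production rule set would need broader, validated patterns.

```python
import re

# Hypothetical rule set: labels and patterns are illustrative assumptions.
PII_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "credit_card": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "us_phone": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def luhn_valid(number: str) -> bool:
    """Luhn checksum, used to cut false positives on long digit runs."""
    digits = [int(c) for c in number if c.isdigit()]
    checksum = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        checksum += d
    return len(digits) >= 13 and checksum % 10 == 0


def find_pii(text: str) -> list:
    """Return (label, matched_text) pairs for known PII formats."""
    findings = []
    for label, pattern in PII_PATTERNS.items():
        for match in pattern.finditer(text):
            value = match.group().strip(" -")
            if label == "credit_card" and not luhn_valid(value):
                continue
            findings.append((label, value))
    return findings
```

Calling `find_pii("SSN 123-45-6789")` returns `[("ssn", "123-45-6789")]`. Questions like cross-customer leakage have no fixed format, so they still need the semantic evaluation described above.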

Adversarial input detection (B002, B004, B005): Prompt injection patterns, jailbreak attempts, encoded payloads, scraping detection. These are well-understood attack patterns with established detection approaches. A mix of deterministic pattern matching (catching known injection phrases and encoded exploits) and semantic evaluation (catching novel social engineering and role-play manipulation).
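As a rough sketch of the deterministic layer, the snippet below checks input against a few known injection phrases and also decodes base64-looking tokens before re-checking them. The phrase list, the 24-character threshold, and the function names are assumptions chosen for illustration; real attack corpora are much larger and change constantly.

```python
import base64
import binascii
import re

# Hypothetical phrase list; a real deployment would maintain a larger one.
INJECTION_PHRASES = [
    re.compile(r"ignore (?:all |any )?(?:previous|prior) instructions", re.I),
    re.compile(r"disregard (?:the |your )?system prompt", re.I),
    re.compile(r"you are now (?:in )?developer mode", re.I),
]
# Assumed heuristic: long runs of base64 characters may hide a payload.
BASE64_TOKEN = re.compile(r"[A-Za-z0-9+/]{24,}={0,2}")


def decode_candidates(text: str):
    """Yield decoded text for substrings that are valid base64 and valid UTF-8."""
    for match in BASE64_TOKEN.finditer(text):
        try:
            decoded = base64.b64decode(match.group(), validate=True).decode("utf-8")
        except (binascii.Error, UnicodeDecodeError):
            continue
        yield decoded


def detect_injection(text: str) -> list:
    """Return the patterns that matched, in plain text or inside encoded payloads."""
    hits = [p.pattern for p in INJECTION_PHRASES if p.search(text)]
    for decoded in decode_candidates(text):
        hits += [f"base64:{p.pattern}" for p in INJECTION_PHRASES if p.search(decoded)]
    return hits
```

An empty list means no deterministic rule fired. It does not mean the input is safe, which is why the semantic layer runs alongside it.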

Agent scope enforcement (B006, B009): Preventing agents from accessing unauthorized tools, making out-of-scope API calls, or dumping excessive data in outputs. This is where session-aware evaluation matters. You need to evaluate what an agent does across an entire action chain, not just individual calls.
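One way to picture session-aware evaluation is a small state object that accumulates across the action chain. The sketch below is an assumption-laden illustration: `SessionPolicy`, `SessionState`, `evaluate_call`, and the record cap are hypothetical names, not anything defined by the standard.

```python
from dataclasses import dataclass, field


@dataclass
class SessionPolicy:
    allowed_tools: set
    max_records_per_session: int  # cap on records returned across the whole chain


@dataclass
class SessionState:
    records_returned: int = 0
    violations: list = field(default_factory=list)


def evaluate_call(policy: SessionPolicy, state: SessionState,
                  tool: str, records: int) -> bool:
    """Decide one call using what the session has already done."""
    if tool not in policy.allowed_tools:
        state.violations.append(("B006", f"unauthorized tool: {tool}"))
        return False
    if state.records_returned + records > policy.max_records_per_session:
        # Each call may look fine alone; the cumulative total is what trips the rule.
        state.violations.append(("B009", "cumulative output exceeds session cap"))
        return False
    state.records_returned += records
    return True
```

With a cap of 100 records, two calls returning 60 records each would both pass a per-call check, but the second is denied here because the session total would reach 120.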

Output safety (C003, C004, C005, C006, C007, C008): Blocking harmful content, out-of-scope responses, high-risk advice without disclaimers, and output vulnerabilities like SQL injection or XSS in generated code. Semantic evaluation handles the nuanced cases (is this medical advice or just health information?). Deterministic rules handle the clear-cut cases (is there a javascript: URL in this output?).
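The clear-cut cases lend themselves to a small rule table. The following is a minimal sketch with assumed rule names and patterns; it will produce false positives on prose that merely discusses these constructs, so treat it as a starting point only.

```python
import re

# Hypothetical deterministic rules for risky constructs in generated output.
OUTPUT_RULES = {
    "javascript_url": re.compile(r"javascript\s*:", re.I),
    "script_tag": re.compile(r"<\s*script\b", re.I),
    "inline_event_handler": re.compile(r"\bon\w+\s*=\s*[\"']", re.I),
}


def scan_output(text: str) -> list:
    """Return the sorted names of every rule that matches the output."""
    return sorted(name for name, rule in OUTPUT_RULES.items() if rule.search(text))
```

For example, `scan_output('<a href="javascript:alert(1)">x</a>')` returns `["javascript_url"]`, while ordinary prose returns an empty list.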

Hallucination prevention (D001): Detecting fabricated citations, fake statistics, invented legal precedents, and confident claims on uncertain topics. This is almost entirely semantic evaluation since hallucinations are by definition plausible-looking content that happens to be wrong.

Tool call safety (D003): Validating that agent tool invocations are authorized, checking for consequential actions without approval, detecting excessive tool call patterns (runaway loops), and blocking privilege escalation attempts.
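A minimal sketch of these checks, assuming a hypothetical tool catalogue and an arbitrary repeat threshold, might look like this. The tool names, `NEEDS_APPROVAL`, and `MAX_REPEAT_CALLS` are illustrative assumptions.

```python
from collections import Counter

# Hypothetical tool catalogue for illustration only.
ALLOWED_TOOLS = {"search_docs", "send_email", "issue_refund"}
NEEDS_APPROVAL = {"send_email", "issue_refund"}  # consequential actions
MAX_REPEAT_CALLS = 5  # assumed threshold for flagging a runaway loop


def check_tool_call(tool: str, history: list, approved: bool = False) -> tuple:
    """Return (allowed, reason) for a proposed call, given prior calls in the session."""
    if tool not in ALLOWED_TOOLS:
        return False, "unauthorized tool"
    if tool in NEEDS_APPROVAL and not approved:
        return False, "approval required"
    if Counter(history)[tool] >= MAX_REPEAT_CALLS:
        return False, "possible runaway loop"
    return True, "ok"
```

Returning a reason string alongside the decision means every denial can be logged as an evidence artifact rather than silently dropped.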

Acceptable use and disclosure (E010, E015, E016): Detecting policy violations in inputs, ensuring AI-generated external communications include disclosure, and maintaining activity logs.
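Disclosure and logging are the simplest of these to illustrate. The sketch below assumes a fixed disclosure sentence and a JSON log line; the wording, field names, and helper names are hypothetical, and the control ID is passed in by the caller rather than hard-coded.

```python
import json
from datetime import datetime, timezone

# Assumed disclosure wording; the actual text is a policy decision.
DISCLOSURE = "This message was generated by an AI assistant."


def ensure_disclosure(message: str) -> str:
    """Append the disclosure line unless the message already contains it."""
    if DISCLOSURE.lower() in message.lower():
        return message
    return f"{message.rstrip()}\n\n{DISCLOSURE}"


def log_record(control_id: str, event: str, detail: str) -> str:
    """Build one JSON activity-log line, timestamped in UTC."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "control_id": control_id,
        "event": event,
        "detail": detail,
    })
```

Because `ensure_disclosure` is idempotent, it can run on every outbound message without stacking duplicate notices.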

Societal safety (F001, F002): Blocking malware generation, attack planning assistance, and CBRN-related content.
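As a quick sanity check on the arithmetic, the groupings above can be written down as data and tallied. The dictionary simply restates the control IDs listed in this section; the variable names are arbitrary.

```python
from collections import Counter

# Control IDs exactly as grouped in the list above.
AUTOMATABLE = {
    "PII and data protection": ["A003", "A004", "A005", "A006", "A007"],
    "Adversarial input detection": ["B002", "B004", "B005"],
    "Agent scope enforcement": ["B006", "B009"],
    "Output safety": ["C003", "C004", "C005", "C006", "C007", "C008"],
    "Hallucination prevention": ["D001"],
    "Tool call safety": ["D003"],
    "Acceptable use and disclosure": ["E010", "E015", "E016"],
    "Societal safety": ["F001", "F002"],
}

ACTIVE_REQUIREMENTS = 49  # 51 in the standard minus 2 merged in Q1 2026

all_ids = [cid for ids in AUTOMATABLE.values() for cid in ids]
automatable_total = len(all_ids)
procedural_total = ACTIVE_REQUIREMENTS - automatable_total
per_domain = Counter(cid[0] for cid in all_ids)  # first letter is the domain

print(automatable_total, procedural_total, dict(per_domain))
```

The tally comes to 23 automatable controls, which leaves 26 of the 49 active requirements for the procedural workstream.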

26 Controls That Need Human Work

These cannot be automated because they require organizational decisions, legal documents, or third-party relationships:

Legal documents (A001, A002): You need actual Terms of Service, Data Processing Agreements, and Privacy Policies that specify your data handling practices. No automation generates these for you. A lawyer writes them.

Third-party testing programs (B001, C010, C011, C012, D002, D004): Six separate requirements for quarterly third-party assessments. You need to hire an external red team, give them access, and get evaluation reports. This is the most expensive part of AIUC-1 compliance. Schellman or similar firms handle this.

Infrastructure security (B003, B007, B008): Managing public disclosure of technical details, enforcing user access privileges, and securing model deployment environments. These map to your existing DevOps and security practices. If you already have SOC 2, you have most of this.

Organizational governance (C001, C002, C009, E001-E009, E011-E014, E017): Risk taxonomy definitions, pre-deployment testing procedures, feedback mechanisms, incident response plans, accountability assignments, vendor due diligence, internal reviews, processing location records, regulatory compliance documentation, quality management systems, and transparency policies. This is the bulk of the procedural work. Much of it overlaps with ISO 42001 and SOC 2 documentation if you already have those. Note that the E ranges here still include the two requirements merged in Q1 2026, so the list shows 14 Domain E identifiers while only 12 count toward the 26.

Foundation model provider docs (F001.1, F002.1): You need documentation from your model provider about their CBRN and cyber testing. These are evidence items under F001 and F002 rather than separate controls, so they do not add to the count of 26. OpenAI, Anthropic, and Google all publish model cards and safety evaluations. Collecting and maintaining this is manual but straightforward.

The Evidence Problem

Here is where it gets interesting. For every control, AIUC-1 specifies what evidence an auditor expects to see. The evidence types break into four categories:

Technical Implementation (configs, code screenshots, logs): This is the majority of evidence for the 23 automatable controls. If you have automated enforcement running, this evidence exists by default. Every rule that fires, every violation that gets logged, every evaluation that runs is an evidence artifact.

Legal Policies (ToS, DPA, AUP, Privacy Policy): Human-written legal documents. You need these regardless of what tools you use.

Operational Practices (internal reviews, meeting notes, process documentation): Evidence that your team actually follows the procedures. Quarterly review meeting notes, risk taxonomy update logs, access review records.

Third-party Evaluations (external audit reports): Reports from your hired third-party assessors showing they tested your systems quarterly.

The automation advantage is not just enforcement. It is evidence generation. When your auditor asks for "screenshot of code implementing PII detection and filtering" (evidence A006.1), you do not scramble to take screenshots. You export your active PII detection rules with their evaluation history. When they ask for "logs showing out-of-scope attempts with frequency data" (evidence C004.2), you pull your violation report filtered by the C004 control ID.
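To make the evidence pull concrete, here is a small sketch that filters a violation log by control ID and produces per-day frequency data. The record shape (`control_id`, `timestamp`, `detail`) and the sample entries are invented for illustration; an actual enforcement system will have its own schema.

```python
from collections import Counter


def violations_for_control(violations: list, control_id: str) -> list:
    """Keep only the log entries recorded against one control."""
    return [v for v in violations if v["control_id"] == control_id]


def frequency_by_day(violations: list) -> Counter:
    """Count entries per calendar day, assuming ISO 8601 timestamps."""
    return Counter(v["timestamp"][:10] for v in violations)


# Hypothetical sample log entries.
sample_log = [
    {"control_id": "C004", "timestamp": "2026-01-05T09:12:00Z", "detail": "out-of-scope request"},
    {"control_id": "A006", "timestamp": "2026-01-05T10:40:00Z", "detail": "SSN in output"},
    {"control_id": "C004", "timestamp": "2026-01-05T16:03:00Z", "detail": "out-of-scope request"},
    {"control_id": "C004", "timestamp": "2026-01-06T08:20:00Z", "detail": "out-of-scope request"},
]

c004_evidence = violations_for_control(sample_log, "C004")
c004_frequency = frequency_by_day(c004_evidence)
```

The point of the sketch is that the report is a query over data that already exists, not a document someone assembles before the audit.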

The companies that struggle with AIUC-1 audits will be the ones assembling evidence after the fact. The ones that breeze through will have enforcement running continuously with evidence accumulating automatically.

The Crosswalk Multiplier

One more thing worth noting. AIUC-1 does not exist in isolation. Every control maps to at least one other framework:

ISO 42001 (the AI management system standard) maps extensively to Domains A, C, and E. If you are pursuing ISO 42001, your AIUC-1 evidence covers significant ground.

The EU AI Act (most obligations are scheduled to apply from August 2026) maps to Articles 9, 11, 13, 14, 15, and 52. AIUC-1's safety and accountability domains generate evidence directly applicable to EU AI Act compliance.

NIST AI RMF maps across all four functions (GOVERN, MAP, MEASURE, MANAGE). US federal agencies and their contractors increasingly reference NIST AI RMF. AIUC-1 evidence maps cleanly.

The OWASP Top 10 for LLM Applications maps to Domains B and D. If your security team already thinks in OWASP terms, AIUC-1's security and reliability domains will feel familiar.

This means investing in AIUC-1 compliance is not single-use work. The same enforcement infrastructure and evidence artifacts serve multiple compliance needs simultaneously.

What This Means for Your Roadmap

If you are an AI agent company targeting enterprise sales, here is the practical takeaway:

First, separate the 23 automatable controls from the 26 procedural ones. Attack them as two parallel workstreams. The automation work is a tooling decision. The procedural work is an organizational decision.

Second, do not treat evidence generation as a post-hoc activity. If your enforcement infrastructure generates evidence as a byproduct of operation, audit prep becomes a reporting exercise instead of a panic project.

Third, recognize that AIUC-1 is updated every quarter. Your compliance posture needs to be a living system, not a one-time project. Static documentation that matched the Q4 2025 standard will be out of date by Q2 2026.

Fourth, the crosswalk value is real. If you are already working toward EU AI Act compliance or ISO 42001, you likely have significant overlap with AIUC-1 already. Map what you have before you build from scratch.

The companies that move fastest on AIUC-1 will have a structural advantage in enterprise sales. When a Fortune 500 CISO asks "are you AIUC-1 compliant?" the answer should not be "we are working on it." It should be "here is our compliance report."

We built the AIUC-1 compliance pack: 6 policy packs covering all 6 domains with 64 enforceable rules, ready to deploy. Automated enforcement for the 23 automatable controls, with continuous evidence generation for audits. Learn more about AIUC-1, browse the policy packs, or get started.