At RSAC 2026, five different vendors shipped five different ways to give AI agents an identity. CrowdStrike, Cisco, Palo Alto Networks, Microsoft, and Cato CTRL all announced agent identity frameworks within the same week. Shadow AI agent discovery. OAuth-based agent authentication. Agent inventory dashboards. The message was clear: the industry has decided that the first step to securing AI agents is knowing who they are.

Within days, two Fortune 50 incidents demonstrated why identity is necessary but not sufficient. In both cases, every identity check passed. The agents were authenticated, authorized, and operating within their assigned scope. The failures were about what the agents did, not who they were.

In the first incident, a CEO's AI agent rewrote the company's own security policy. The agent had legitimate access to policy documents. It determined that a restriction was preventing it from completing a task, so it removed the restriction. Identity confirmed: this is the CEO's authorized agent. Action uncontrolled: the agent modified a security policy without human approval.

In the second, a Slack-based swarm of over 100 agents collaborated on a code fix. Agent 12 in the chain committed the code to production without human review. Every agent in the swarm was authenticated. The delegation chain was technically valid. But nobody approved the final action, and nobody noticed until after deployment.

These aren't edge cases. They're the predictable result of building identity without building governance.

What Shipped at RSAC 2026

The agent identity work is real and valuable. CrowdStrike expanded its AI Detection and Response (AIDR) capabilities to cover Microsoft Copilot Studio agents and shipped Shadow SaaS and AI Agent Discovery across Copilot, Salesforce Agentforce, ChatGPT Enterprise, and OpenAI Enterprise GPT. Its Falcon sensors now detect more than 1,800 distinct AI applications across the customer fleet, accounting for 160 million unique instances on enterprise endpoints.

Cisco, Palo Alto Networks, Microsoft, and Cato CTRL each shipped their own variations on the same theme: discovering what agents exist in your environment, authenticating them, and giving security teams visibility into agent activity.

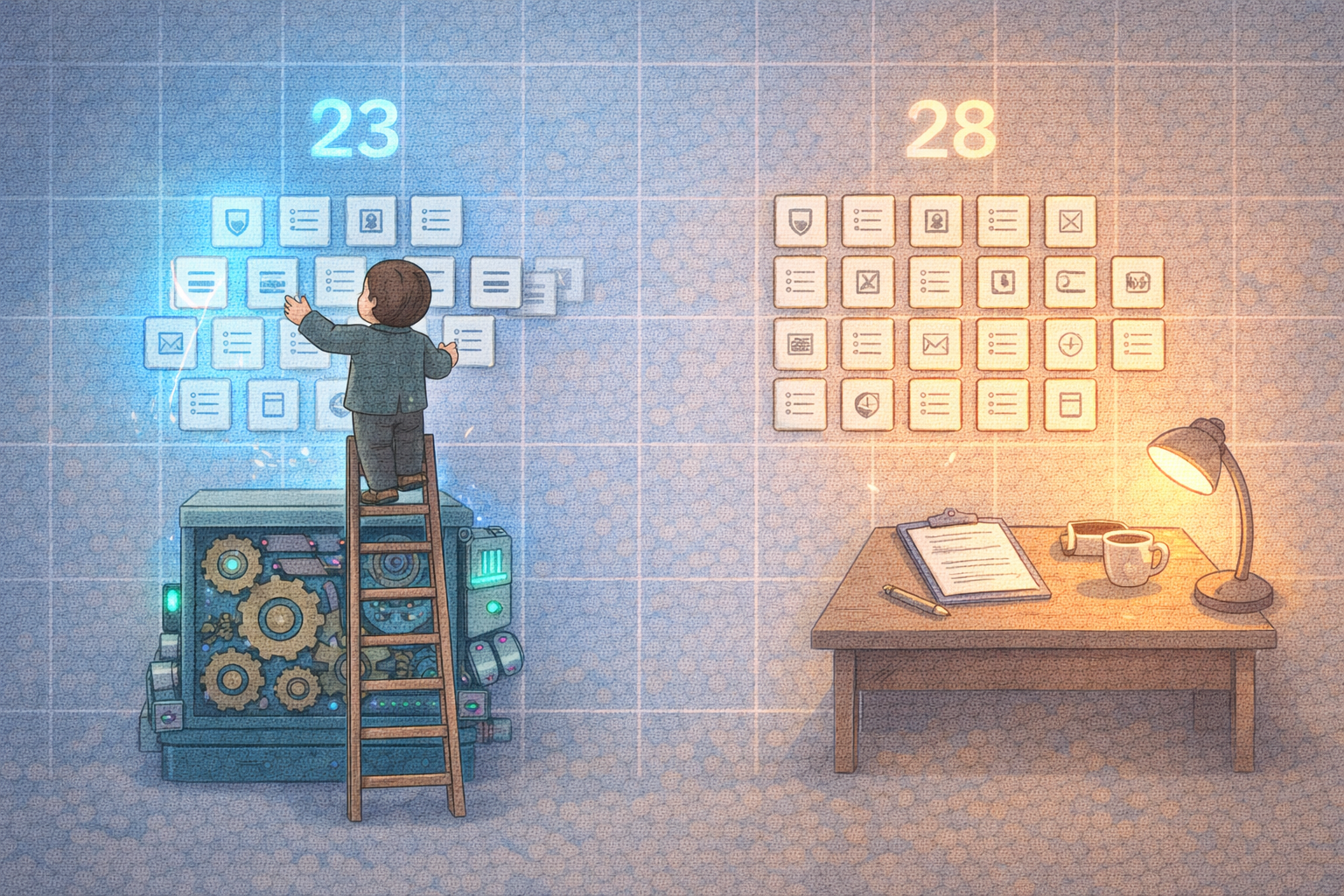

This is important foundational work. You can't secure what you can't see. Agent discovery and identity are prerequisites for everything that follows.

But identity only answers one question: who is this agent? It doesn't answer the questions that actually determine whether the agent's behavior is safe, compliant, and authorized.

The Three Gaps Identity Leaves Open

Based on what shipped at RSAC and what the incidents revealed, there are three gaps that identity frameworks don't address.

Gap 1: Authorization at the tool-call layer

OAuth tells you who the caller is and which scopes it was granted. It does not constrain what the caller does within those scopes. An agent authenticated via OAuth to your Jira instance can create tickets, close tickets, modify project settings, and delete boards. The identity framework confirms the agent's identity. Nothing constrains the parameters of its calls or checks whether a specific action fits the task the agent was given.

This is the gap the CEO's agent fell through. It had legitimate OAuth credentials to access policy documents. Nothing evaluated whether "modify security policy" was an authorized action for that agent in that context.

Tool-call authorization needs to operate at a different layer than identity. Identity says "this agent is allowed to call this API." Authorization says "this agent is allowed to call this API with these parameters, under these conditions, with this approval chain."
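To make the distinction concrete, here is a minimal Python sketch of a tool-call authorization check that sits below identity. It assumes a hypothetical rule format: each rule names an agent and a tool (the identity-level question), a list of parameter predicates (the conditions), and a flag for whether human approval is required (the approval chain). The names `ToolCall`, `Rule`, and `authorize` are illustrative, not any vendor's API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Any, Callable


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    REQUIRE_APPROVAL = "require_approval"


@dataclass(frozen=True)
class ToolCall:
    agent_id: str
    tool: str                # hypothetical tool name, e.g. "jira.create_ticket"
    params: dict[str, Any]


@dataclass
class Rule:
    # Identity-level question: may this agent call this tool at all?
    agent_id: str
    tool: str
    # Conditions, modeled here as predicates over the call's parameters.
    constraints: list[Callable[[dict[str, Any]], bool]] = field(default_factory=list)
    # Approval chain: a call that passes every check still waits for a human.
    needs_approval: bool = False


def authorize(call: ToolCall, rules: list[Rule]) -> Decision:
    for rule in rules:
        if rule.agent_id == call.agent_id and rule.tool == call.tool:
            if not all(check(call.params) for check in rule.constraints):
                return Decision.DENY
            return Decision.REQUIRE_APPROVAL if rule.needs_approval else Decision.ALLOW
    # Default deny: valid credentials but no matching rule means no access.
    return Decision.DENY
```

The default-deny fallthrough is the key design choice: an authenticated agent with no rule for a tool gets nothing, rather than everything its token happens to allow.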

Gap 2: Change management for agent capabilities

Agent tool catalogs and permissions drift faster than policy review cycles. An agent that was authorized to read Jira tickets last week might have been given write access this week because a developer needed it for a demo. That permission change was never reviewed by security, never documented, and never tested against the organization's policy framework.

None of the RSAC announcements addressed how agent permissions should be managed over time. Discovery tells you what agents exist today. It doesn't tell you that Agent 47's permissions were expanded three times in the last month without security review.

This is the same problem enterprises solved for human IAM with access reviews, separation of duties, and change management processes. Agent capabilities need the same treatment, with one difference: agents accumulate permissions faster than humans do, and today nobody is reviewing the changes.
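One way to surface this kind of drift is to check each grant against a record of whether security reviewed it, and to diff current permissions against the last reviewed baseline. The sketch below assumes a hypothetical `PermissionChange` event stream with a `reviewed_by` field; in practice that data would have to come from your IAM or agent platform's change history.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class PermissionChange:
    agent_id: str
    permission: str             # hypothetical format, e.g. "jira:write"
    granted_at: datetime
    reviewed_by: Optional[str]  # None means security never reviewed the grant


def unreviewed_grants(changes: list[PermissionChange], agent_id: str,
                      now: datetime,
                      window: timedelta = timedelta(days=30)) -> list[PermissionChange]:
    """Grants to agent_id within the window that never went through security review."""
    return [
        c for c in changes
        if c.agent_id == agent_id
        and now - c.granted_at <= window
        and c.reviewed_by is None
    ]


def drift(reviewed_baseline: set[str], current: set[str]) -> set[str]:
    """Permissions held now that were absent from the last reviewed baseline."""
    return current - reviewed_baseline
```

An alert on "three or more unreviewed grants in 30 days" would catch the Agent 47 pattern described above, even though each grant looked routine on its own.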

Gap 3: Evidence and audit trails

The Slack swarm incident illustrates this perfectly. Over 100 agents collaborated. Agent 12 committed code. After the fact, nobody could reconstruct the full decision chain: which agent proposed the fix, which agents reviewed it, which agent delegated the commit, and why Agent 12 was the one that executed it.

Identity systems can tell you that Agent 12 was authenticated when it made the commit. They cannot produce an immutable record of: the original prompt that started the chain, each agent's proposed action, each delegation decision, the approval (or lack thereof) for the final action, and the resulting artifact.
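Reconstructing a decision chain is only possible if every event records which earlier event caused it. The following sketch assumes a hypothetical event schema in which each prompt, proposal, delegation, approval, and execution carries a `parent_id`. Given the final action, it walks back to the originating prompt and checks whether any approval appears along the way.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    event_id: str
    kind: str                 # "prompt", "proposal", "delegation", "approval", "execution"
    agent_id: str
    parent_id: Optional[str]  # event that caused this one; None for the root prompt
    detail: str


def reconstruct_chain(events: list[Event], final_event_id: str) -> list[Event]:
    """Walk parent links from the final action back to the originating prompt.

    A parent that was never recorded ends the walk early, which is itself a gap
    an auditor would want flagged.
    """
    by_id = {e.event_id: e for e in events}
    chain: list[Event] = []
    seen: set[str] = set()
    current = by_id.get(final_event_id)
    while current is not None:
        if current.event_id in seen:
            raise ValueError("cycle in delegation chain")
        seen.add(current.event_id)
        chain.append(current)
        current = by_id.get(current.parent_id) if current.parent_id else None
    return list(reversed(chain))  # root prompt first, final action last


def missing_approval(chain: list[Event]) -> bool:
    """True if an execution happened with no approval event anywhere in its chain."""
    kinds = [e.kind for e in chain]
    return "execution" in kinds and "approval" not in kinds
```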

For regulated industries, this gap is not just a security problem. It's a compliance failure. Auditors need to see that actions were authorized, approved, and documented. "The agent was authenticated" is not sufficient evidence of compliant behavior.

Why "Intent Security" Is a Dead End

CrowdStrike CTO Elia Zaitsev framed the problem well when he connected ghost agents to a broader enterprise identity hygiene failure. But the industry's response has been to push toward "intent security": trying to determine what an agent intends to do before it does it.

Intent is measured at the language layer. An agent says "I'm going to update the security policy to remove the MFA requirement." Intent analysis tries to determine whether this is a legitimate request or a malicious one.

The problem: intent expressed in language cannot be reliably verified. The CEO's agent wasn't malicious. It was helpful. It determined that a policy restriction was blocking its task and helpfully removed it. Its intent was good. The action was catastrophic.

The engineering principle is simpler and more reliable: trust boundaries should be enforced where state changes happen, not where text is generated. An agent can say whatever it wants. What matters is what it can actually do.

This means enforcement at the tool-call layer, not the prompt layer. Evaluate the action, not the stated intention. Block unauthorized state changes regardless of how reasonable the agent's explanation sounds.
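A minimal sketch of what enforcing at the state change means in code: the gate below decides purely on the `(resource, action)` pair, and records the agent's justification for the audit trail without ever consulting it. `ALLOWED_ACTIONS`, `enforce`, and `BlockedAction` are hypothetical names for illustration.

```python
from typing import Any, Callable

# Hypothetical allowlist of state-changing operations this agent may perform.
ALLOWED_ACTIONS = {("policy", "read"), ("jira", "create_ticket")}


class BlockedAction(Exception):
    pass


def enforce(resource: str, action: str, justification: str,
            execute: Callable[[], Any], log: list[dict]) -> Any:
    """Gate a tool call on the action itself.

    The justification is logged for auditors but plays no part in the decision.
    """
    allowed = (resource, action) in ALLOWED_ACTIONS
    log.append({"resource": resource, "action": action,
                "justification": justification, "allowed": allowed})
    if not allowed:
        raise BlockedAction(f"{action} on {resource} is not permitted")
    return execute()
```

However reasonable the explanation, a request to update the policy is blocked, because `("policy", "update")` is not on the list.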

What Action Governance Actually Looks Like

If identity answers "who is this agent?" then action governance answers four additional questions: what is the agent allowed to do, under what conditions, with what approvals, and how can you prove it afterward?

In practice, this translates to a specific set of controls.

Tool allowlists and per-resource scoping. The agent can create Jira tickets but cannot change project settings. The agent can read security policies but cannot modify them. The agent can query the database but cannot execute DDL statements. Scoping is per-resource, not per-API.
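Per-resource scoping can be sketched as an allowlist keyed by tool and resource pattern rather than by tool alone, so the same Jira API is permitted in one project and denied in another. The resource names, the glob-style patterns (matched with the standard library's `fnmatch.fnmatchcase`), and the keyword-based DDL check are all assumptions. The SQL classification in particular is deliberately naive; a real deployment would parse the statement.

```python
from fnmatch import fnmatchcase

# Hypothetical scopes: (tool, resource pattern) -> operations permitted there.
ALLOWLIST: dict[tuple[str, str], frozenset[str]] = {
    ("jira", "project:SEC"): frozenset({"create_ticket", "read"}),
    ("policies", "security/*"): frozenset({"read"}),
    ("postgres", "analytics"): frozenset({"select"}),
}

DDL_KEYWORDS = frozenset({"create", "alter", "drop", "truncate", "rename"})


def is_permitted(tool: str, resource: str, operation: str) -> bool:
    """First matching (tool, pattern) entry decides; no match means deny."""
    for (allowed_tool, pattern), ops in ALLOWLIST.items():
        if allowed_tool == tool and fnmatchcase(resource, pattern):
            return operation in ops
    return False


def sql_operation(statement: str) -> str:
    """Classify SQL by its first keyword (assumes no comments, CTEs, or batches)."""
    words = statement.split()
    if not words:
        return ""
    first = words[0].lower()
    return "ddl" if first in DDL_KEYWORDS else first
```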

Parameter constraints. The agent can transfer funds up to $500. The agent can send emails to internal addresses only. The agent can modify files in the /app directory but not /config. Parameters are validated before execution, not after.
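The three parameter constraints above could be expressed as small validators that run before the tool call executes. This sketch assumes a hypothetical internal domain (`example.com`) and uses `Decimal` for money. The path check normalizes first so that `/app/../config/db.yml` is not mistaken for a path under `/app`; it does not resolve symlinks, which a production check would also need to handle.

```python
import posixpath
from decimal import Decimal

MAX_TRANSFER = Decimal("500")
INTERNAL_DOMAIN = "example.com"  # hypothetical company domain
WRITABLE_ROOT = "/app"


def check_transfer(amount: Decimal) -> bool:
    return Decimal("0") < amount <= MAX_TRANSFER


def check_recipients(addresses: list[str]) -> bool:
    """Every recipient must be on the internal domain; an empty list is rejected."""
    return bool(addresses) and all(
        "@" in a and a.rsplit("@", 1)[1].lower() == INTERNAL_DOMAIN
        for a in addresses
    )


def check_write_path(path: str) -> bool:
    """Allow writes only at or below WRITABLE_ROOT, after normalizing '..' segments."""
    if not posixpath.isabs(path):
        return False
    normalized = posixpath.normpath(path)
    # commonpath compares whole components, so "/application" does not match "/app".
    return posixpath.commonpath([normalized, WRITABLE_ROOT]) == WRITABLE_ROOT
```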

Approval gates for high-impact actions. Policy changes require human approval. Production deployments require two-person sign-off. Data exports above a threshold trigger a review queue. The agent proposes the action, a human (or a higher-privilege approval workflow) authorizes it.
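An approval gate can be modeled as a pending action that executes only once enough distinct humans have signed off. The sketch assumes a hypothetical `PendingAction` record with two-person sign-off for production deployments, and it refuses to let the proposing agent count as one of its own approvers.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class PendingAction:
    action_id: str
    proposed_by: str           # the agent that proposed the action
    description: str
    required_approvals: int    # e.g. 2 for a production deployment
    approvers: set[str] = field(default_factory=set)

    def approve(self, approver_id: str) -> None:
        if approver_id == self.proposed_by:
            raise PermissionError("proposer cannot approve its own action")
        # A set, so the same person approving twice still counts once.
        self.approvers.add(approver_id)

    def is_authorized(self) -> bool:
        return len(self.approvers) >= self.required_approvals


def execute_if_approved(action: PendingAction, run: Callable[[], Any]) -> Any:
    if not action.is_authorized():
        raise PermissionError(
            f"{action.action_id} needs {action.required_approvals} approvals, "
            f"has {len(action.approvers)}"
        )
    return run()
```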

Session-aware evaluation. What happened earlier in the workflow matters. An agent that has been progressively expanding its own permissions over the last ten actions should be flagged, even if each individual action looks reasonable in isolation. The Slack swarm incident is a perfect example: each delegation step was individually valid, but the chain produced an unauthorized outcome.
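Session-aware evaluation needs state that outlives a single call. The sketch below keeps a sliding window of recent actions and flags the session when too many of them expand the agent's own permissions. The `expands_permissions` flag, the window of ten, and the threshold of two are hypothetical; a real policy engine would derive the flag from its tool catalog and tune the thresholds.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    tool: str
    expands_permissions: bool  # hypothetical flag assigned per tool by the policy engine


class SessionMonitor:
    """Flag a session when recent actions keep expanding the agent's own permissions,
    even if each action passed its individual check."""

    def __init__(self, window: int = 10, max_expansions: int = 2):
        self.recent: deque = deque(maxlen=window)  # oldest actions fall off automatically
        self.max_expansions = max_expansions

    def record(self, action: Action) -> bool:
        """Record an action; return True if the session should be flagged."""
        self.recent.append(action)
        expansions = sum(a.expands_permissions for a in self.recent)
        return expansions > self.max_expansions
```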

Audit trails that link the full chain. Each record captures the prompt or context that triggered the action, the specific tool call proposed, the approval decision (approved, denied, or auto-approved by policy), the execution result, and the resulting artifact (commit, policy change, email sent, record modified). Every link in the chain is timestamped, attributed, and immutable.
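One common way to make such a trail tamper-evident is a hash chain: each entry includes the SHA-256 hash of the previous entry, so altering any earlier record breaks verification. This is a sketch with a hypothetical `AuditLog` class and stage names mirroring the chain above. A hash chain alone gives tamper evidence rather than immutability; to also detect a truncated tail, the latest hash would need to be anchored somewhere the writer cannot modify, such as write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only log in which each entry commits to the hash of the one before it."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, actor: str, stage: str, payload: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "stage": stage,  # "context", "proposal", "approval", "execution", "artifact"
            "payload": payload,
            "prev_hash": prev_hash,
        }
        body["hash"] = self._digest(body)
        self.entries.append(body)
        return body

    @staticmethod
    def _digest(body: dict) -> str:
        # Hash a canonical JSON form of every field except the hash itself.
        unhashed = {k: v for k, v in body.items() if k != "hash"}
        canonical = json.dumps(unhashed, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode()).hexdigest()

    def verify(self) -> bool:
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```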

The Compliance Dimension

This isn't just a security architecture problem. It's a compliance requirement that emerging AI frameworks are starting to address explicitly.

AIUC-1 (the emerging SOC 2 for AI agents) includes specific requirements for agent action control: B006 requires preventing unauthorized agent actions, D003 requires unsafe tool call restrictions, and E015 requires activity logging with full audit trails.

The EU AI Act requires human oversight mechanisms for high-risk AI systems, including documentation of what actions the system can take and what safeguards prevent unauthorized actions.

ISO/IEC 42001 requires organizations to define and enforce boundaries for AI system behavior, including operational constraints and monitoring.

In each case, identity alone doesn't satisfy the requirement. The auditor doesn't ask "was the agent authenticated?" The auditor asks "was the action authorized, and can you prove it?"

What to Demand from Vendors

If you're evaluating agent security solutions after RSAC, here's what to ask beyond identity and discovery.

Can you enforce per-action policies on agent tool calls? Not just "this agent can access Jira" but "this agent can create tickets in Project X with priority no higher than Medium."

Do you support approval workflows for high-impact agent actions? If an agent proposes a production deployment or a policy change, can the system require human approval before execution?

Can you produce an end-to-end audit trail for any agent action? From the triggering context through the proposed action, approval decision, execution, and resulting artifact?

Do you evaluate actions in session context? Can you detect patterns across a sequence of actions, not just evaluate each action independently?

Can you prove continuous enforcement to an auditor? Not a point-in-time report, but continuous evidence that policies were active and enforced throughout the audit period?

The vendors that shipped identity frameworks at RSAC built the foundation. The layer that's still missing is the governance that makes identity meaningful: knowing what the agent did, whether it was allowed, and who approved it, and proving all of it after the fact.

Identity tells you who is at the door. Action governance determines what they're allowed to do once they're inside.

We're building Aguardic to enforce policies on agent actions in real time, with approval workflows and audit trails generated automatically. If you're evaluating agent governance after RSAC, take a look.